Editor's note: The following is a guest article from Charla Griffy-Brown, PhD, professor of information systems at Pepperdine Graziadio Business School.

In Charles Dickens' 1843 novel "A Christmas Carol," the Ghost of Christmas Present confronts Ebenezer Scrooge with two wretchedly miserable children peering out from the bottom of his flowing robe.

Says the Ghost, "This boy is Ignorance. This girl is Want. Beware them both, and all of their degree, but most of all beware this boy, for on his brow I see that written which is Doom, unless the writing be erased."

Dickens — whose early years were spent living in poverty — was a fierce crusader against a system that was largely unaware of, and indifferent to, the state of society's disenfranchised. To him, "Want" represented society's pressing needs, but "Ignorance" prevented systemic improvement in the social state.

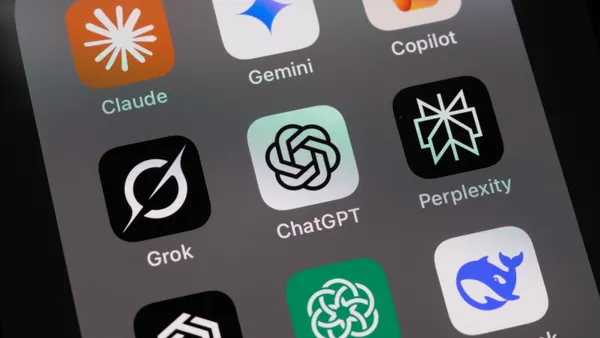

Today, artificial intelligence (AI) holds tremendous potential to positively impact virtually every segment of society. Yet AI is only as good as the design of the cognitive algorithm and the data upon which the reasoning is based.

Even though users "want" a self-teaching system that can reason as well as or better than humans, if the design is faulty or the data biased, AI will perpetuate the bias. It could further an "ignorance" that stalls societal advancement or the ability to address significant needs such as climate change, a more equitable criminal justice system, or healthcare.

Omnipresent bias

There's little question that the bias exists. Facial recognition software is overwhelmingly more successful at identifying white men.

An analysis of an AI software system used by judges to set parole found black defendants were far more likely than white defendants to be incorrectly flagged as being at high risk of recidivism. Software for predictive policing has been criticized as disproportionately targeting black and Latinx communities.

In hospitals, researchers discovered algorithmic bias resulted in black patients being less likely than white patients to get extra medical help, despite being sicker. AI deployments in filtering resumes for hiring practices have largely excluded women.

In all of these cases, the AI was not intended to produce these results. Instead, biases built into the methodology and the data led it to exacerbate the problems it was designed to address.

Technology companies in California — known for its desire for inclusiveness and ground zero of technological innovation — should take the lead on ensuring that AI is free of bias. But the state's technology sector may be part of the problem.

Data aggregators that monetize information, including sites like Google, Amazon, Facebook, and Twitter, control much of the data that fuels the majority of the AI currently in use.

Importantly, the decisions around how the data is used, the terms and agreements for consent, and all issues related to data privacy are made by the fiduciary governance of these corporations, not through public discourse.

The best way to eliminate bias in AI and ensure its ethical deployment is to insist on diversity in AI designers and diversity in AI decision-making.

Yet there is very little diversity at many of these companies.

According to a 2018 report from the National Center for Women & Information Technology, only one-quarter of all technology-related positions are held by women.

At Apple, although new hiring trends show promise, the share of black technical workers falls below the roughly 13% that black people represent in the overall U.S. population. Hispanic workers make up only about 7% of employees in computer and mathematical occupations, according to the Brookings Institution.

In the United States, only 24% of all companies have women as part of their corporate board of directors. While boards have made some advancements in terms of gender diversity, many companies suggest that gains are slow in other areas of social diversity (e.g. race/ethnicity, age, gender).

Importantly, in addition to social diversity, professional diversity on boards (members with backgrounds other than CFO or CEO, such as CTO, HR, cybersecurity or risk) has been found to have a positive impact on firm performance.

Tackling bias by design

When diversity and oversight aren't baked into the system, bias occurs. In the design process, diversity will ensure different questions are asked at the different stages, from methodological framing to data collection and processing, in order to limit bias.

Diversity in design and decision-making will also ensure a more transparent system.

Early AI in human resources was associated with bias because the pool of data the AI drew upon consisted mostly of men. Unintentionally, the system downgraded resumes that highlighted "women's" achievements or touted soft leadership traits related to communication and emotional intelligence.

Similarly, some AI systems intended to advance speech and language recognition, translation and understanding fail to incorporate multicultural and linguistic idioms, which limits how well the algorithms serve the people who commonly use those idioms.

Developers must carefully curate data sets to identify relevant areas of concern, ensure there is representative balance in the data set, and test AI algorithms against a broad range of potential biases.
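The two checks named above, representative balance and testing for bias, can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a description of how any particular company audits its systems: the function names, the 5-point tolerance, the reference shares and the toy data are all hypothetical, and a real audit would cover many more attributes and error measures.

```python
from collections import Counter


def representation_gaps(groups, reference_shares, tolerance=0.05):
    """Compare each group's share of the data set with a reference share.

    Returns {group: observed_share - reference_share} for every group whose
    gap exceeds the tolerance (hypothetical default: 5 percentage points).
    """
    total = len(groups)
    if total == 0:
        raise ValueError("data set is empty")
    counts = Counter(groups)
    gaps = {}
    for group, expected in reference_shares.items():
        observed = counts.get(group, 0) / total
        if abs(observed - expected) > tolerance:
            gaps[group] = round(observed - expected, 3)
    return gaps


def false_positive_rate_by_group(records):
    """Share of truly negative cases the model wrongly flagged, per group.

    `records` is an iterable of (group, predicted_positive, actually_positive).
    """
    false_positives = Counter()
    negatives = Counter()
    for group, predicted, actual in records:
        if not actual:
            negatives[group] += 1
            if predicted:
                false_positives[group] += 1
    return {group: false_positives[group] / n for group, n in negatives.items()}


# Toy data (invented for illustration): a resume pool that is 80% men.
pool = ["men"] * 8 + ["women"] * 2
print(representation_gaps(pool, {"men": 0.5, "women": 0.5}))

# Toy predictions (invented): group A is wrongly flagged twice as often as B.
predictions = [
    ("A", True, False), ("A", True, False), ("A", False, False), ("A", False, False),
    ("B", True, False), ("B", False, False), ("B", False, False), ("B", False, False),
    ("A", True, True), ("B", True, True),
]
print(false_positive_rate_by_group(predictions))
```

A large gap in either output does not by itself prove a system is unfair, but it tells designers where to ask harder questions before deployment.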

Additionally, diversity among executives and decision-makers ensures a 10,000-foot understanding of the risk that bias poses not just to the public, but also to the organization's bottom line.

Google, for example, just announced the purchase of fitness tracker Fitbit as part of its foray into an AI-driven health and wellness platform. But Fitbit purchasers are overwhelmingly female, between the ages of 35 and 44, with household incomes over $100,000.

Savvy executives will not ignore potential bias related to a niche, homogeneous dataset, as it could imperil business initiatives related to health and wellness.

Until the problems with design and development are addressed by AI companies, their innovations cannot be relied upon for bias-free computing. This is not a theory, but a certitude.

Technology can amplify either our best or our worst qualities. It can also either accelerate the grand challenges we face or accelerate our ability to find solutions to them.

Last year, California's Little Hoover Commission released a report, "Artificial Intelligence: A Roadmap for California," challenging the state to adopt an AI policymaking agenda to advance governance and reshape society.

If California hopes to achieve the aspirational social state identified in that report, its technology industry needs to double down on its efforts to eradicate "ignorance," also known as data and design bias, so we can address "want" or social needs as we deploy the emerging technologies that will continue to transform our lives.