The benefits of a coordinated and well-planned Big Data program have been proven time and again. But implementing Big Data projects is not easy. Just 13% of organizations achieve full-scale production for their in-house Big Data projects, according to a recent study by Qubole.

By understanding some of the biggest Big Data obstacles, companies can prepare ways to avoid them.

The following five roadblocks commonly arise in Big Data projects and are likely to stand between companies and success in the digital age:

Biting off more than you can chew

Big Data projects often run into hurdles when a company tries to reinvent its core IT systems — a multiyear effort that can run to hundreds of millions of dollars, according to a recent Boston Consulting Group (BCG) report.

Not only do "massive centralized efforts" take too long, they're also a waste of money, according to report authors Antoine Gourévitch and Lars Fæste at BCG.

But companies can avoid projects that would require fundamental changes in data handling and instead adopt a "use-case-driven" Big Data approach in which the architecture evolves to meet the requirements of each new initiative, according to the report. Implementations then take months rather than years, and the return on investment arrives sooner.

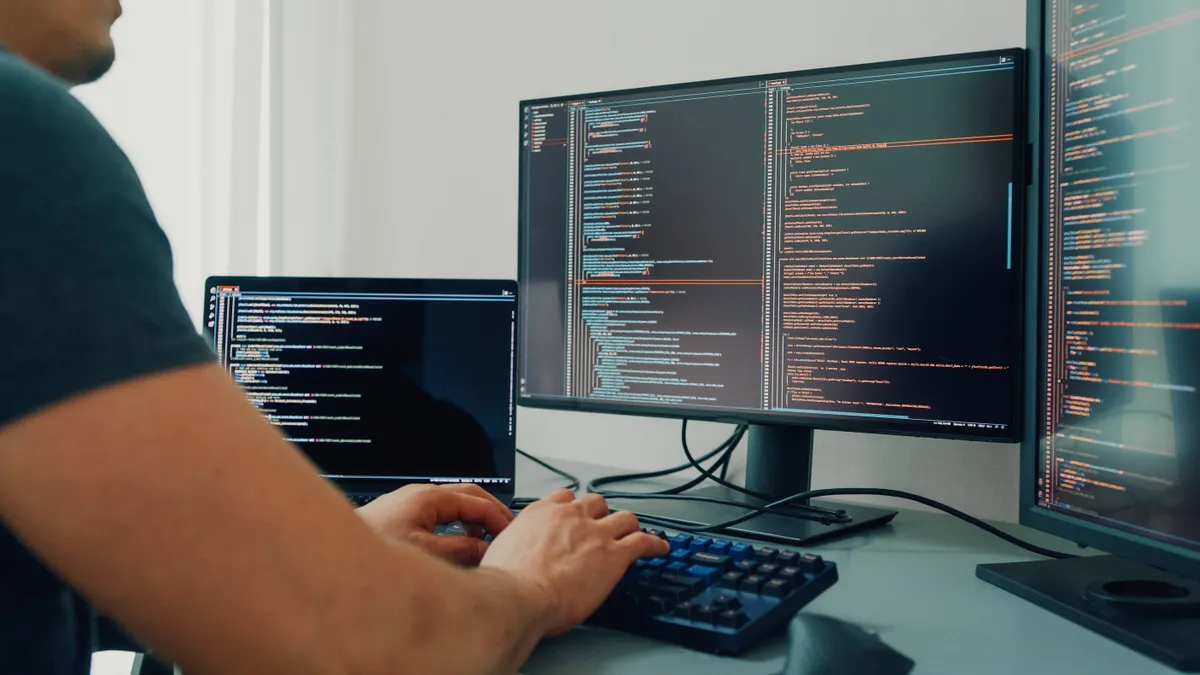

Lack of talent

The digital world is exploding. As businesses race to collect and analyze data, many organizations are paralyzed by the complexity of their infrastructure and technologies.

To address this problem, many organizations attempt to hire experts, but hit a wall due to an industry shortage of talent. More than one-third of respondents said they are having difficulty finding people with expertise in Big Data projects, according to a recent report by Dimensional Research and Qubole.

"I hear many enterprises complain that their Big Data project has stalled or never got off the ground because they could not find the right people to implement the project," said Alex Lesser, vice president at PSSC Labs.

Even if an organization has a skilled team in place, many of today’s data teams get bogged down with the manual efforts that come with maintaining Big Data infrastructure.

"Big Data projects are failing because they are reliant on highly skilled data teams manually turning the crank to keep the infrastructure working," said Ashish Thusoo, CEO and co-founder at Qubole. "Organizations cannot count on simply adding personnel to their data teams. Instead they should be finding the tools to help their data teams work more effectively."

By leveraging automation and the cloud, said Thusoo, data teams can focus on the high-value work of scaling and operationalizing data initiatives instead of worrying about hardware and software infrastructure.

"With the cloud and machine learning, time-consuming tasks like capacity planning and software updates can be seamlessly automated," he said.

Combining new and legacy data

New data sources, such as sensors or mobile devices, are easily captured in modern enterprise data hubs. But businesses also need to reference critical customer or transaction history data to make sense of these newer sources.

"That data is often stored on legacy platforms such as mainframes, and can be some of the most difficult to access and integrate," said Tendu Yogurtcu, chief technology officer at Syncsort. "Without it, businesses will have an incomplete picture, and won’t have confidence in the accuracy of their data and the insights it provides."

Achieving a 360-degree customer view

Accessing and integrating a growing variety of data sources also makes it harder to maintain a single view of each customer. Businesses must catalogue customer data and create business rules to validate, match and cleanse it.

They must then add missing information from third-party databases to create a complete picture of the customer.

"Not only does this make it easier to provide personalized information to best serve customer needs, it also improves customer loyalty," said Yogurtcu. "It provides insights into demographics, customers’ appetite for risk, preferred products and more, so businesses can target the right customers with the right offers."

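The validate, match, cleanse and enrich steps described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not a method described by Syncsort or any source quoted here: the field names, the email-based matching rule, the validation pattern and the `third_party` lookup are all hypothetical.

```python
import re

def cleanse(record):
    """Normalize fields so equivalent values compare equal."""
    return {
        "name": " ".join(record.get("name", "").split()).title(),
        "email": record.get("email", "").strip().lower(),
        "phone": re.sub(r"\D", "", record.get("phone", "")),  # digits only
    }

def is_valid(record):
    """Hypothetical business rule: a record needs a plausible email address."""
    return re.fullmatch(r"[^@\s]+@[^@\s]+\.[^@\s]+", record["email"]) is not None

def build_customer_view(sources, third_party):
    """Merge records from several sources into one record per customer.

    Matching rule (an assumption): records that share the same cleansed
    email address belong to the same customer.
    """
    customers = {}
    for record in sources:
        clean = cleanse(record)
        if not is_valid(clean):
            continue  # a real pipeline would route these to manual review
        merged = customers.setdefault(clean["email"], {})
        for field, value in clean.items():
            # Keep the first non-empty value seen for each field.
            if value and not merged.get(field):
                merged[field] = value
    # Enrich: fill fields that are still missing from third-party data.
    for email, merged in customers.items():
        for field, value in third_party.get(email, {}).items():
            if not merged.get(field):
                merged[field] = value
    return customers
```

Calling `build_customer_view(records, lookup)` with records drawn from, say, a CRM export and a mobile app log returns one merged entry per email address. Production systems typically rely on fuzzier matching (names, addresses, device IDs) than the exact-email rule assumed here.
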
Avoiding risk

In terms of company culture, for data-based transformations to work, everyone needs to adopt a less risk-averse attitude.

Companies have to move more like a software development operation to find new ways to apply data, according to the BCG report. That means creating an environment that fosters experimentation.

At the same time, IT leaders need to ensure those risks are calculated. Digital transformation "winners" will need to be "agile, pragmatic and disciplined," according to the report.

So while moving quickly and trying new things, organizations also need a roadmap to ensure their approaches to data are optimized and create long-term value.