In the thirteenth century, Italian papermakers varied the thickness of paper in patterns to embed designs that identified the manufacturer or owner and protected against copycats and thieves.

The term "digital watermark" was coined in the 1990s, and in the decades since, the technology has spread across multimedia to provide creators with authentication, identification, tracking and copyright protection. With a few seconds to spare and an internet connection, any amateur can embed a watermark into their work.

But digital watermarks today protect far more than music and stock photos: they can be embedded into code to extend the same safeguards to technology. For deep neural networks (DNNs), an in-demand subset of artificial intelligence, watermarking can help protect the models that are becoming the "oil of this century," according to Marc Ph. Stoecklin, manager of Cognitive Cybersecurity Intelligence at IBM Research, in an interview with CIO Dive.

DNNs and other AI algorithms are cumbersome and resource-intensive to build, demanding copious amounts of training data, expertise and compute power, he said. With the rise of model markets, in which developers upload models to monetize or make open source, claiming ownership and protecting the time and monetary investment in models from misuse or fraud is important.

In 2017, NYU researchers published a study on vulnerabilities in machine learning models and the dangers of outsourcing model training. They found that a backdoor hidden in a classifier could hijack a neural network's output whenever a specific trigger appeared in the input. For example, placing a yellow Post-it note, or an image of a bomb or a flower, on a stop sign could trigger the backdoor and change the network's label from stop sign to a different traffic sign.

Even when a model was retrained for a different task, the backdoor could persist and reduce accuracy by an average of 25%, according to the research. In the hands of malicious actors, such backdoors in AI systems that touch consumer, enterprise and national security could have serious consequences.

Inspired by this research, Stoecklin and his colleagues realized that a similar technique could protect DNNs by hiding a response pattern that only the creator controls. The attack could become the defense, according to Ian Molloy, principal research staff member and manager of Cognitive Security Services.

How it works

When a photograph is watermarked, the owner embeds a word, symbol or image in it, which can later be extracted to prove ownership in cases of actual or suspected misuse or theft. Watermarking AI models would work in much the same way, except that the watermark is slipped discreetly into a DNN, ideally without anyone else ever realizing it is there, said Molloy.

Stoecklin and Molloy's research proposes embedding the watermark during training and later checking for it remotely through the model's API, or application programming interface. The watermark itself could take the form of meaningful content combined with the training data, irrelevant data samples or noise, according to the company's blog post.
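To make the idea concrete, here is a minimal Python sketch of how a watermark "key set" could be assembled for an image classifier, assuming grayscale images stored as nested lists with pixel values between 0 and 1. The three variants mirror the categories in the blog post: a stamped square patch stands in for meaningful content, samples from an unrelated dataset stand in for irrelevant data, and Gaussian noise covers the noise option. Every key sample is relabeled with a secret target class chosen by the owner. The function names, patch size and noise level are hypothetical choices for illustration, not IBM's implementation.

```python
import random
from typing import List, Tuple

Image = List[List[float]]  # grayscale image, pixel values in [0.0, 1.0]


def stamp_patch(img: Image, size: int = 3, value: float = 1.0) -> Image:
    """'Meaningful content' variant (hypothetical): stamp a small square, a
    stand-in for a logo or text, into the bottom-right corner of a copy."""
    out = [row[:] for row in img]  # copy so the original sample is untouched
    h, w = len(out), len(out[0])
    for r in range(h - size, h):
        for c in range(w - size, w):
            out[r][c] = value
    return out


def add_noise(img: Image, sigma: float, rng: random.Random) -> Image:
    """'Noise' variant: add Gaussian noise, clipped to the valid pixel range."""
    return [[min(1.0, max(0.0, p + rng.gauss(0.0, sigma))) for p in row] for row in img]


def make_watermark_set(
    train: List[Tuple[Image, int]],
    unrelated: List[Image],
    target_label: int,
    kind: str,
    n: int,
    seed: int = 0,
) -> List[Tuple[Image, int]]:
    """Build n (image, label) pairs, all labeled with the owner's secret
    target_label, to be mixed into the training data so the model learns
    the hidden response."""
    rng = random.Random(seed)
    key_set = []
    for _ in range(n):
        if kind == "content":
            img = stamp_patch(rng.choice(train)[0])
        elif kind == "unrelated":
            img = rng.choice(unrelated)  # sample from a different domain
        elif kind == "noise":
            img = add_noise(rng.choice(train)[0], sigma=0.3, rng=rng)
        else:
            raise ValueError(f"unknown watermark kind: {kind}")
        key_set.append((img, target_label))
    return key_set
```

The owner would train on `train + make_watermark_set(...)` and keep the key set private, since it doubles as the evidence for a later ownership check.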

Watermarking DNNs works well with algorithms that have many degrees of freedom in which to embed something and with algorithms that can be interacted with, according to Stoecklin. An image recognition model, for example, is difficult to prune in order to find and remove the watermark, and because it accepts submitted images and returns a response, it is easy to test whether the watermark is triggered.

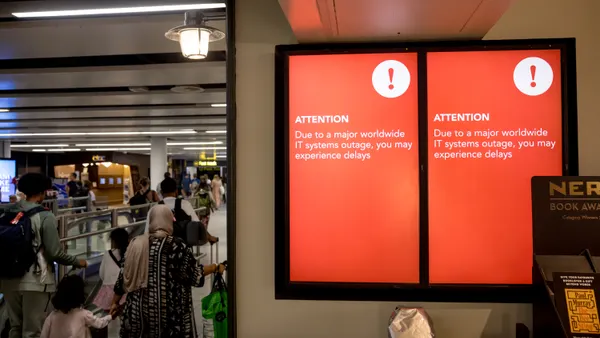

If a DNN suspected of misuse or theft is deployed in an online service or a product, the owner could submit the backdoor trigger to it and check the response to determine whether it is the same model. This principle also works well with DNNs for text classification, speech-to-text systems and similar modalities.
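The verification step can be sketched the same way. In this minimal Python example, `query` is a hypothetical stand-in for whatever prediction endpoint the suspect service exposes, and the key set is the list of trigger inputs and secret labels the owner kept from training. The 0.8 threshold is an arbitrary illustrative value; choosing it in practice means weighing the risk of a false accusation against the risk of missing a stolen model.

```python
from typing import Callable, List, Sequence, Tuple

Image = List[List[float]]
KeySample = Tuple[Image, int]  # (trigger input, secret label the owner trained in)


def watermark_match_rate(query: Callable[[Image], int], key_set: Sequence[KeySample]) -> float:
    """Send each trigger input to the suspect model and return the fraction
    of responses that match the owner's secret labels."""
    if not key_set:
        raise ValueError("key_set must not be empty")
    hits = sum(1 for img, label in key_set if query(img) == label)
    return hits / len(key_set)


def looks_like_our_model(
    query: Callable[[Image], int],
    key_set: Sequence[KeySample],
    threshold: float = 0.8,  # illustrative value, not from the source
) -> bool:
    """Flag the suspect model if it reproduces the hidden response on at least
    `threshold` of the key set. An independently trained model should rarely
    assign these unusual inputs to the owner's chosen labels."""
    return watermark_match_rate(query, key_set) >= threshold
```

Because the check needs only inputs and the labels that come back, it can run against a public service without access to the suspect model's weights, which is why the approach suits models that accept submissions and return responses.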

Models that offer no clear way to submit inputs are harder to test. The DNN may be embedded deep inside a product, which prevents anyone from submitting inputs to it directly.

The AI space moves rapidly, so predicting whether this tool will take off, and how long that would take, is nearly impossible. But just like watermarking photos, watermarking DNNs is a tool individual developers can choose to use without the rest of the market having to adopt it, according to Stoecklin.

Watermarking models does have some limitations: theft cannot be detected when a stolen model is deployed in an offline service, and watermarking cannot protect against theft through prediction APIs, in which an attacker reconstructs a model by repeatedly querying it, according to the post.

Nevertheless, early-stage protection mechanisms such as this watermarking tool are laying the groundwork for important IP and copyright protections for technologies that are built quickly and move through the market quickly.