Dive Brief:

- The Center for AI Standards and Innovation at the National Institute of Standards and Technology has entered into agreements with Google DeepMind, Microsoft and xAI to conduct pre-deployment evaluations and research to assess the companies’ frontier AI capabilities.

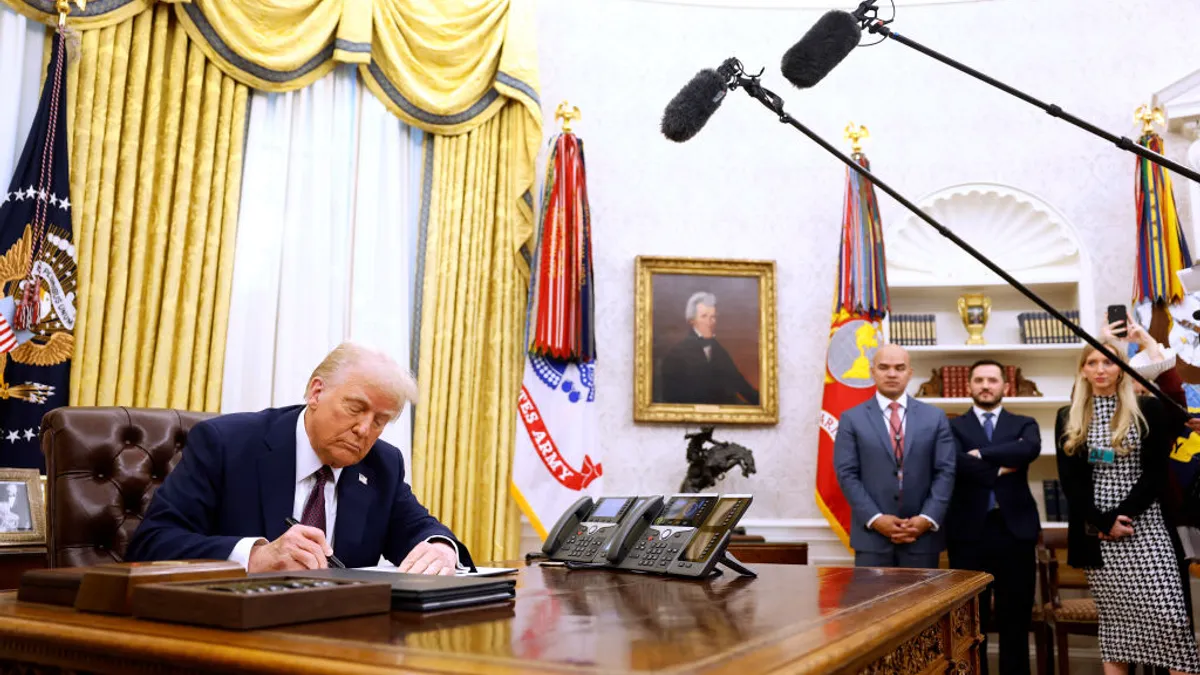

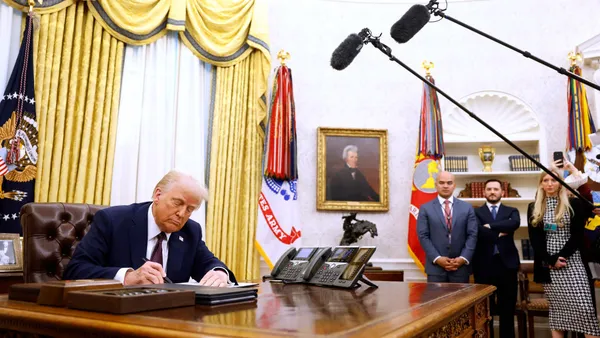

- The agreements build upon previously announced partnerships with OpenAI and Anthropic, with the goal of strengthening AI security. Terms of the agreements have been updated to include directives from CAISI, the Secretary of Commerce and President Donald Trump’s AI Action Plan from last year.

- The agreements allow the government to evaluate the partner companies’ AI models before they become available to the public and to continue assessing them after deployment. “Independent, rigorous measurement science is essential to understanding frontier AI and its national security implications,” said CAISI Director Chris Fall in an announcement. “These expanded industry collaborations help us scale our work in the public interest at a critical moment.”

Dive Insight:

CAISI serves as the government’s primary point of contact for the tech industry, and its industry partnerships support information sharing, product improvements and an understanding of current and future AI capabilities in the U.S. and abroad, NIST said. The partnerships also give NIST insight into the national security capabilities that AI models might offer and the risks they might pose.

Trump initially took a hands-off approach to AI regulation in the first year of his second term, with a goal of accelerating AI innovation, building domestic AI infrastructure and advancing international AI diplomacy and security.

But The New York Times reported Monday that the administration is seeking to create more regulatory oversight for AI, as policymakers face pressure from national security officials over the risks posed by powerful AI models, such as Anthropic’s Mythos. Initiatives such as Project Glasswing, Anthropic’s effort to identify and remedy software vulnerabilities, further underscore a growing push to scale governance alongside tech adoption.

The expansion of the CAISI framework signals that sovereign alignment will be a mandatory metric of AI procurement for enterprises, Nick Patience, VP and practice lead of AI platforms at The Futurum Group, said in an email to CIO Dive.

In March, the Department of Defense formally designated Anthropic a security risk, a decision that was backed by federal judges last month, despite the company’s participation in CAISI’s evaluation process. The designation shows that a vendor can still be sanctioned if its internal ethics clash with national security mandates, Patience said.

For CIOs, the new agreements with Google, Microsoft and xAI are a form of political insurance, Patience said. Choosing a vendor that hasn’t secured favored status from the Department of Commerce and NIST carries a “massive contagion risk,” he said, especially for an enterprise that has or wants federal contracts.

“We have entered an era where a model’s utility to the state is a key predictor of its long-term viability in the enterprise stack,” he said.