Dive Brief:

- Google Vision comes closest of the image recognition engines tested to matching "what a human thinks" when generating image descriptions, according to a Perficient Digital report.

- As part of the study, users were asked which descriptions most accurately matched each image; the descriptions were presented anonymously and came from either engine-generated or human-generated tags. Human hand tagging received the most votes, 573, followed by Google Vision with 217. The study included more than 2,000 images assessed by AWS Rekognition, Google Vision, IBM Watson and Microsoft Azure Computer Vision.

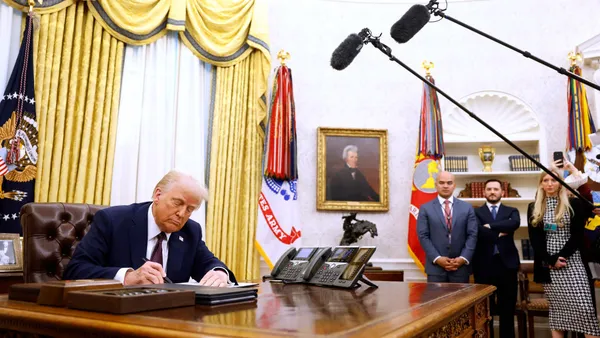

- The study tested each engine's ability to accurately assign tags to 500 images of charts, landscapes, people and products. Human hand tagging had nearly 90% accuracy, followed by Google Vision at 82%. IBM Watson scored lowest, at 56%.

Dive Insight:

The use of image recognition technology is sweeping across industries, from marketing to city law enforcement. But concerns are growing about how sectors are using the technology.

Perficient Digital's study reaffirmed concerns that engines are not as accurate as humans at describing images. However, when evaluating each engine's "confidence levels," the study found Amazon, Google and Microsoft had more confidence in 90% of their tagging than the tagging done by human hand.

The gap between how humans and machines label images could have unintended consequences, and critics of image and facial recognition technology warn of biases.

The Federal Bureau of Investigation houses one of the largest government repositories for facial recognition, totaling more than 36 million images. However, the Government Accountability Office recently concluded the bureau lacks sufficient information on the accuracy of its facial recognition technology capabilities.

Even so, more use cases for image and facial recognition technologies are emerging. In June, Amazon received a patent for drone technology to conduct "surveillance as a service."

Despite the scrutiny around image recognition technologies, companies consider computer vision a necessary artificial intelligence application. Vendors, including IBM, Google and Microsoft, are attempting to remedy concerns by building tools that detect algorithmic biases in AI.